Batmaz, Anıl Ufuk

Name Variants

Batmaz, Anıl Ufuk

A.,Batmaz

A. U. Batmaz

Anıl Ufuk, Batmaz

Batmaz, Anil Ufuk

Anil Ufuk, Batmaz

Batmaz, A.U.

Batmaz, Anil U.

Batmaz, Anil Ufuk K.

Job Title

Assistant Professor (Dr. Öğr. Üyesi)

Main Affiliation

Mechatronics Engineering

Status

Former Staff

Sustainable Development Goals

| SDG | Goal | Research Products |
|---|---|---|
| 11 | Sustainable Cities and Communities | 0 |
| 17 | Partnerships for the Goals | 0 |
| 14 | Life Below Water | 0 |
| 8 | Decent Work and Economic Growth | 0 |
| 15 | Life on Land | 0 |
| 1 | No Poverty | 0 |
| 7 | Affordable and Clean Energy | 0 |
| 6 | Clean Water and Sanitation | 0 |
| 12 | Responsible Consumption and Production | 0 |
| 16 | Peace, Justice and Strong Institutions | 0 |
| 9 | Industry, Innovation and Infrastructure | 0 |
| 3 | Good Health and Well-Being | 4 |
| 2 | Zero Hunger | 0 |
| 4 | Quality Education | 1 |
| 10 | Reduced Inequalities | 0 |
| 13 | Climate Action | 0 |
| 5 | Gender Equality | 0 |

This researcher does not have a Scopus ID.

This researcher does not have a WoS ID.

Scholarly Output

29

Articles

5

Views / Downloads

172/172

Supervised MSc Theses

0

Supervised PhD Theses

0

WoS Citation Count

173

Scopus Citation Count

240

WoS h-index

8

Scopus h-index

10

Patents

0

Projects

0

WoS Citations per Publication

5.97

Scopus Citations per Publication

8.28

Open Access Source

4

Supervised Theses

0
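The two per-publication figures above are consistent with dividing each citation count by the scholarly output of 29. The profile does not state its formula, so the short sketch below is an assumption that merely reproduces the listed values; the function name `citations_per_publication` is hypothetical.

```python
# Figures copied from the profile metrics; the formula itself is an assumption.
scholarly_output = 29
citations = {"WoS": 173, "Scopus": 240}


def citations_per_publication(total_citations: int, publications: int) -> float:
    """Return mean citations per publication, rounded to two decimals."""
    if publications <= 0:
        raise ValueError("publications must be positive")
    return round(total_citations / publications, 2)


per_publication = {
    source: citations_per_publication(count, scholarly_output)
    for source, count in citations.items()
}
print(per_publication)
```

Both results match the listed WoS and Scopus values when rounded to two decimals, which suggests (but does not confirm) that the repository uses the full scholarly output as the denominator.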

| Journal / Proceedings | Count |
|---|---|
| IEEE Conference on Virtual Reality and 3D User Interfaces (VR) -- MAR 16-21, 2024 -- Orlando, FL | 3 |
| 22nd IEEE International Symposium on Mixed and Augmented Reality (ISMAR) -- OCT 16-20, 2023 -- Sydney, AUSTRALIA | 2 |
| Conference on Human Factors in Computing Systems - Proceedings | 2 |
| 28th ACM Symposium on Virtual Reality Software and Technology, VRST 2022 | 2 |
| International Case Studies in Food Tourism | 2 |

Scopus Quartile Distribution

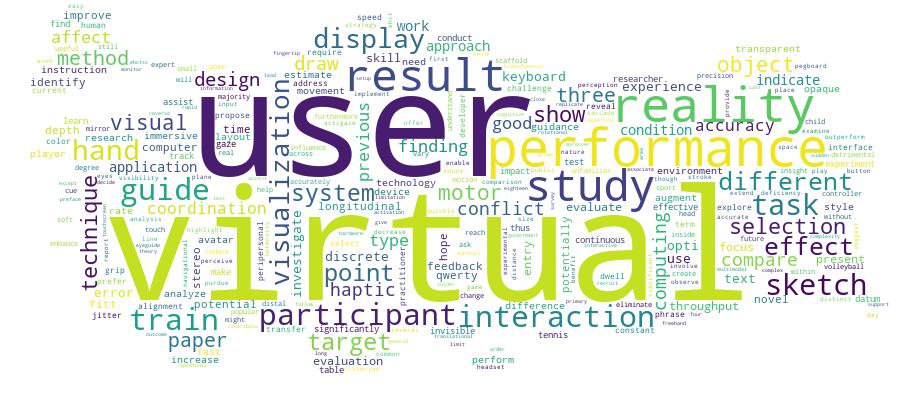

Competency Cloud

29 results

Scholarly Output Search Results

Now showing 1 - 10 of 29

- Evaluation of an Immersive COVID-19 Data Visualization (IEEE Computer Soc, 2023)
  Article. Citation - WoS: 4. Citation - Scopus: 5.
  Authors: Kaya, Furkan; Celik, Elif; Batmaz, Anil Ufuk K.; Mutasim, Aunnoy K.; Stuerzlinger, Wolfgang
  Abstract: COVID-19 restrictions have detrimental effects on the population, both socially and economically. However, these restrictions are necessary as they help reduce the spread of the virus. For the public to comply, easily comprehensible communication between decision makers and the public is thus crucial. To address this, we propose a novel 3-D visualization of COVID-19 data, which could increase the awareness of COVID-19 trends in the general population. We conducted a user study and compared a conventional 2-D visualization with the proposed method in an immersive environment. Results showed that our 3-D visualization approach facilitated understanding of the complexity of COVID-19. A majority of participants preferred to see the COVID-19 data with the 3-D method. Moreover, individual results revealed that our method increases the engagement of users with the data. We hope that our method will help governments to improve their communication with the public in the future.

- Effects of Opaque, Transparent and Invisible Hand Visualization Styles on Motor Dexterity in a Virtual Reality Based Purdue Pegboard Test (IEEE Computer Soc, 2023)
  Conference Object. Citation - WoS: 7. Citation - Scopus: 9.
  Authors: Voisard, Laurent; Hatira, Amal; Sarac, Mine; Kersten-Oertel, Marta; Batmaz, Anil Ufuk
  Abstract: The virtual hand interaction technique is one of the most common interaction techniques used in virtual reality (VR) systems. A VR application can be designed with different hand visualization styles, which might impact motor dexterity. In this paper, we aim to investigate the effects of three different hand visualization styles (transparent, opaque, and invisible) on participants' performance through a VR-based Purdue Pegboard Test (PPT). A total of 24 participants were recruited and instructed to place pegs on the board as quickly and accurately as possible. The results indicated that using the invisible hand visualization significantly increased the number of task repetitions completed compared to the opaque hand visualization. However, no significant difference was observed in participants' preference for the hand visualization styles. These findings suggest that an invisible hand visualization may enhance performance in the VR-based PPT, potentially indicating the advantages of a less obstructive hand visualization style. We hope our results can guide developers, researchers, and practitioners when designing novel virtual hand interaction techniques.

- When Anchoring Fails: Interactive Alignment of Large Virtual Objects in Occasionally Failing AR Systems (Springer Science and Business Media Deutschland GmbH, 2022)
  Conference Object. Citation - Scopus: 2.
  Authors: Batmaz, A.U.; Stuerzlinger, W.
  Abstract: Augmented reality systems show virtual object models overlaid over real ones, which is helpful in many contexts, e.g., during maintenance. Assuming all geometry is known, misalignments in 3D poses will still occur without perfectly robust viewer and object 3D tracking. Such misalignments can impact the user experience and reduce the potential benefits associated with AR systems. In this paper, we implemented several interaction algorithms to make manual virtual object alignment easier, based on previously presented methods, such as HoverCam, SHOCam, and a Signed Distance Field. Our approach also simplifies the user interface for manual 3D pose alignment in 2D input systems. The results of our work indicate that our approach can reduce the time needed for interactive 3D pose alignment, which improves the user experience.

- The Effect of the Vergence-Accommodation Conflict on Virtual Hand Pointing in Immersive Displays (Assoc Computing Machinery, 2022)
  Conference Object. Citation - WoS: 40. Citation - Scopus: 53.
  Authors: Batmaz, Anil Ufuk; Machuca, Mayra Donaji Barrera; Sun, Junwei; Stuerzlinger, Wolfgang
  Abstract: Previous work hypothesized that for Virtual Reality (VR) and Augmented Reality (AR) displays a mismatch between disparities and optical focus cues, known as the vergence and accommodation conflict (VAC), affects depth perception and thus limits user performance in 3D selection tasks within arm's reach (peri-personal space). To investigate this question, we built a multifocal stereo display, which can eliminate the influence of the VAC for pointing within the investigated distances. In a user study, participants performed a virtual hand 3D selection task with targets arranged laterally or along the line of sight, with and without a change in visual depth, in display conditions with and without the VAC. Our results show that the VAC influences 3D selection performance in common VR and AR stereo displays and that multifocal displays have a positive effect on 3D selection performance with a virtual hand.

- Measuring the Effect of Stereo Deficiencies on Peripersonal Space Pointing (IEEE Computer Soc, 2023)
  Conference Object. Citation - WoS: 8. Citation - Scopus: 10.
  Authors: Batmaz, Anil Ufuk; Mughrabi, Moaaz Hudhud; Sarac, Mine; Machuca, Mayra Barrera; Stuerzlinger, Wolfgang
  Abstract: State-of-the-art Virtual Reality (VR) and Augmented Reality (AR) headsets rely on single-focal stereo displays. For objects away from the focal plane, such displays create a vergence-accommodation conflict (VAC), potentially degrading user interaction performance. In this paper, we study how the VAC affects pointing at targets within arm's reach with virtual hand and raycasting interaction in current stereo display systems. We use a previously proposed experimental methodology that extends the ISO 9241-411:2015 multi-directional selection task to enable fair comparisons between selecting targets in different display conditions. We conducted a user study with eighteen participants and the results indicate that participants were faster and had higher throughput in the constant VAC condition with the virtual hand. We hope that our results enable designers to choose more efficient interaction methods in virtual environments.

- The Influence of Eye Gaze Interaction Technique Expertise and the Guided Evaluation Method on Text Entry Performance Evaluations (Assoc Computing Machinery, 2025)
  Article. Citation - WoS: 1. Citation - Scopus: 1.
  Authors: Mutasim, Aunnoy K.; Batmaz, Anil Ufuk; Mughrabi, Moaaz Hudhud; Stuerzlinger, Wolfgang
  Abstract: Any investigation of learning unfamiliar text entry systems is affected by the need to train participants on multiple new components simultaneously, such as novel interaction techniques and layouts. The Guided Evaluation Method (GEM) addresses this challenge by bypassing the need to learn layout-specific skills for text entry. However, a gap remains as the GEM's performance has not been assessed in situations where users are unfamiliar with the interaction technique involved, here eye-gaze-based dwell. To address this, we trained participants on only the eye-gaze-based interaction technique over eight days with QWERTY and then evaluated their performance on the OPTI layout with the GEM. Results showed that the unfamiliar OPTI layout outperformed QWERTY, with QWERTY's speed aligning with previous findings, suggesting that interaction technique expertise significantly impacts performance outcomes. Importantly, we also identified that for scenarios where the familiarity with the involved interaction technique(s) is the same, the GEM analyzes the performance of keyboard layouts effectively and quickly identifies the best option.

- Effects of Color Cues on Eye-Hand Coordination Training With a Mirror Drawing Task in Virtual Environment (Frontiers Media SA, 2024)
  Article. Citation - WoS: 3. Citation - Scopus: 2.
  Authors: Alrubaye, Zainab; Mughrabi, Moaaz Hudhud; Manav, Banu; Batmaz, Anil Ufuk
  Abstract: Mirror drawing is a motor learning task that is used to evaluate and improve eye-hand coordination of users and can be implemented in immersive Virtual Reality (VR) Head-Mounted Displays (HMDs) for training purposes. In this paper, we investigated the effect of color cues on user motor performance in a mirror-drawing task between Virtual Environment (VE) and Real World (RW), with three different colors. We conducted a 5-day user study with twelve participants. The results showed that the participants made fewer errors in RW compared to VR, except for pre-training, which indicated that hardware and software limitations have detrimental effects on the motor learning of the participants across different realities. Furthermore, participants made fewer errors with the colors close to green, which is usually associated with serenity, contentment, and relaxation. According to our findings, VR headsets can be used to evaluate participants' eye-hand coordination in mirror drawing tasks to evaluate the motor-learning of participants. VE and RW training applications could benefit from our findings in order to enhance their effectiveness.

- Haptic-Assisted Soldering Training Protocol in Virtual Reality: The Impact of Scaffolded Guidance (Institute of Electrical and Electronics Engineers Inc., 2025)
  Article.
  Authors: Yilmaz, M.; Batmaz, A.U.; Sarac, M.
  Abstract: In this paper, we present a virtual training platform for soldering based on immersive visual feedback (i.e., a Virtual Reality (VR) headset) and scaffolded guidance (i.e., disappearing throughout the training) provided through a haptic device (Phantom Omni). We conducted a between-subject user study experiment with four conditions (2D monitor with no guidance, VR with no guidance, VR with constant, active guidance, and VR with scaffolded guidance) to evaluate their performance in terms of procedural memory, motor skills in VR, and skill transfer to real life. Our results showed that the scaffolded guidance offers the most effective transitioning from the virtual training to the real-life task, even though the VR with no guidance group has the best performance during the virtual training. These findings are critical for the industry and academy looking for safer and more effective training techniques, leading to better learning outcomes in real-life implementations. Furthermore, this work offers new insights into further haptic research in skill transfer and learning approaches while offering information on the possibilities of haptic-assisted VR training for complex skills, such as welding and medical stitching.

- Effect of Grip Style on Peripersonal Target Pointing in VR Head Mounted Displays (IEEE Computer Soc, 2023)
  Conference Object. Citation - WoS: 3. Citation - Scopus: 6.
  Authors: Batmaz, Anil Ufuk; Turkmen, Rumeysa; Sarac, Mine; Machuca, Mayra Donaji Barrera; Stuerzlinger, Wolfgang
  Abstract: When working in Virtual Reality (VR), the user's performance is affected by how the user holds the input device (e.g., controller), typically using either a precision or a power grip. Previous work examined these grip styles for 3D pointing at targets at different depths in peripersonal space and found that participants had a lower error rate with the precision grip but identified no difference in movement speed, throughput, or interaction with target depth. Yet, this previous experiment was potentially affected by tracking differences between devices. This paper reports an experiment that partially replicates and extends the previous study by evaluating the effect of grip style on the 3D selection of nearby targets with the same device. Furthermore, our experiment re-investigates the effect of the vergence-accommodation conflict (VAC) present in current stereo displays on 3D pointing in peripersonal space. Our results show that grip style significantly affects user performance. We hope that our results are useful for researchers and designers when creating virtual environments.

- EyeGuide & EyeConGuide: Gaze-based Visual Guides to Improve 3D Sketching Systems (Assoc Computing Machinery, 2024)
  Conference Object. Citation - WoS: 5. Citation - Scopus: 10.
  Authors: Turkmen, Rumeysa; Gelmez, Zeynep Ecem; Batmaz, Anil Ufuk; Stuerzlinger, Wolfgang; Asente, Paul; Sarac, Mine; Machuca, Mayra Donaji Barrera
  Abstract: Visual guides help to align strokes and raise accuracy in Virtual Reality (VR) sketching tools. Automatic guides that appear at relevant sketching areas are convenient to have for a seamless sketching with a guide. We explore guides that exploit eye-tracking to render them adaptive to the user's visual attention. EyeGuide and EyeConGuide cause visual grid fragments to appear spatially close to the user's intended sketches, based on the information of the user's eye-gaze direction and the 3D position of the hand. Here we evaluated the techniques in two user studies across simple and complex sketching objectives in VR. The results show that gaze-based guides have a positive effect on sketching accuracy, perceived usability and preference over manual activation in the tested tasks. Our research contributes to integrating gaze-contingent techniques for assistive guides and presents important insights into multimodal design applications in VR.
